The rise in Large Language Model (LLM) capabilities is undoubtedly transforming business operations. From streamlining customer interactions to complex content creation, AI innovations are at the forefront of digital transformation. With a wide range of models to choose from, businesses must make a crucial decision: which LLM best fits their strategic objectives? To this end, two key models are examined here: OpenAI’s latest release, GPT-4o, and the rapidly emerging DeepSeek-R1.

Architectural Nuances and Performance Benchmarks

GPT-4o, with its robust "dense model" architecture, excels in tasks requiring deep contextual understanding. This translates to strong performance in applications that demand text generation and comprehensive data analysis. Conversely, DeepSeek-R1's "Mixture of Experts" (MoE) architecture prioritizes efficiency: for each input it activates only a subset of its expert sub-networks, allocating computational resources dynamically to optimize task-specific performance. This design yields strong results on analytical tasks, especially coding and logical inference.
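
To make the MoE idea concrete, the following is a minimal toy sketch of top-k expert routing. It is not DeepSeek-R1's actual implementation: the scalar "experts", the router logits in `gate_scores`, and the helper names `route_top_k` and `moe_forward` are all hypothetical and chosen only for illustration. It shows how a router can select a few experts per input and combine only their outputs.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}

def moe_forward(x, experts, gate_scores, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    weights = route_top_k(gate_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Hypothetical experts: simple scalar functions standing in for sub-networks.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
# Hypothetical router logits for a single input.
gate_scores = [0.1, 2.0, 1.5, -1.0]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
print(selected, output)
```

In this sketch only two of the four experts are evaluated for the input, which is the source of the efficiency gain: compute per input scales with `k`, not with the total number of experts.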

Use Cases and Deployment Considerations

The selection between these LLMs hinges on specific enterprise needs. GPT-4o is well suited to applications that prioritize speed and real-time responsiveness, such as dynamic customer support systems or rapid content creation platforms. With its emphasis on transparency and explainability, DeepSeek-R1 finds a place in industries that require analysis and audit trails, such as finance or legal services.

At Turkish Technology, more than 100 AI projects have been developed, building on recent advances and integrating the DeepSeek-R1-14B and GPT-4o models. The two models were tested in a range of applications and showed competitive performance in different domains.

Projects:

Classification of IFE Surveys:

We are automating the emotional state analysis and classification of IFE (in-flight entertainment) surveys conducted via in-flight screens, in order to speed up customer satisfaction actions.

In this project, on 100 samples, the cloud-based GPT-4o model achieved 87% accuracy, while the DeepSeek-R1-14B model achieved 80%. On some samples, however, DeepSeek performed better emotional analysis than GPT-4o.
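
As a rough way to judge how much weight a 100-sample comparison can bear, the sketch below computes a 95% Wilson score interval for each accuracy figure. The counts 87 and 80 are derived from the percentages reported above; the function name `wilson_interval` and the choice of the Wilson method are assumptions made for illustration, not part of the original test study.

```python
import math

def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives about 95% coverage)."""
    p = correct / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half

# Counts implied by the reported accuracies on 100 samples.
gpt_low, gpt_high = wilson_interval(87, 100)
ds_low, ds_high = wilson_interval(80, 100)

print(f"GPT-4o:          {gpt_low:.3f} to {gpt_high:.3f}")
print(f"DeepSeek-R1-14B: {ds_low:.3f} to {ds_high:.3f}")
print("Intervals overlap:", gpt_low < ds_high)
```

With these counts the two intervals overlap, which suggests that a larger sample would be needed before treating the 7-point gap as a firm difference between the models.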

Online Information Library Chatbot:

The chatbot we developed for the online information library service, which is actively used by Turkish Airlines call center teams, answers questions about documents and increases employee productivity. In the test study, both models answered the questions with similar accuracy rates (91%).

Labeling of Aircraft Component Failures:

In a project where aircraft component failures were labeled, the accuracy rates of the on-premises DeepSeek-R1 and the cloud-based GPT-4o were found to be very close, at 44.5% and 46.5% respectively. This highlights the similar performance of both models in such specialized tasks, though improvements are needed for better understanding of technical aviation terminology.

Translation of Customer Feedback:

To respond to customer complaints and requests in more than 10 languages, we translate complaint texts using large language models. In tests on GPT-4o and DeepSeek, DeepSeek was observed to mix in Chinese characters or to leave the parts it could not translate unchanged, while rendering into Turkish only the parts it could translate. Overall, it fell far behind GPT-4o in translation from foreign languages into Turkish.

General Evaluation:

GPT-4o: It stands out with its wide context window and fast response times, ideal for applications where speed and comprehensive information access are important.

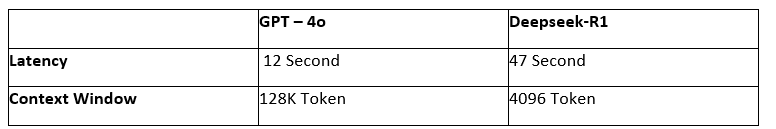

The comparison of Response Time (Latency) and Context Window is as follows:

GPT-4o’s response time is ~3.9 times lower than DeepSeek-R1's, which is an advantage in real-time applications. Its context window is also ~32 times larger, which allows the model to process long inputs better, preserve context, and use complex information effectively.

Ethical and Security Dimensions

As LLMs become more deeply integrated into business, ethical and security considerations grow in importance. With its closed-source structure, GPT-4o has a filtering mechanism for content security and ethical compliance. The model includes various measures to minimize the production of harmful, misleading, or unethical content and is supported by moderation policies. DeepSeek-R1's open-source model offers greater flexibility and allows users to customize the model to a greater extent. In return, it requires additional oversight mechanisms to ensure that use of the model remains ethical, and it places more of the accountability on the user.

Cost and Accessibility

A significant factor in LLM deployment is cost. GPT-4o, being a cloud-based service, incurs API costs that can accumulate with high-volume usage. DeepSeek-R1's open-source nature offers a compelling alternative, enabling on-premises deployment and potentially lower operational expenses.
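
The trade-off between pay-per-use API costs and a fixed on-premises cost can be framed as a simple break-even calculation. The sketch below is illustrative only: `API_PRICE` and `ONPREM_MONTHLY` are hypothetical placeholder figures, not real prices for GPT-4o or for any hardware, and the model ignores factors such as staffing, energy, and separate input and output token pricing.

```python
def monthly_api_cost(tokens_per_month, price_per_million_tokens):
    """API spend for a given monthly token volume."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens

def break_even_tokens(fixed_monthly_cost, price_per_million_tokens):
    """Monthly token volume at which API spend equals the fixed on-premises cost."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000

# Hypothetical placeholder figures (currency units), NOT real prices.
API_PRICE = 10.0          # cost per million tokens via a cloud API
ONPREM_MONTHLY = 5000.0   # amortized hardware plus operations per month

threshold = break_even_tokens(ONPREM_MONTHLY, API_PRICE)
print(f"Break-even volume: {threshold:,.0f} tokens per month")
print(f"API cost at that volume: {monthly_api_cost(threshold, API_PRICE):,.2f}")
```

Under these assumptions, on-premises deployment becomes cheaper only above the break-even volume; below it, the API remains the lower-cost option.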

In Conclusion:

The choice between GPT-4o and DeepSeek-R1 is not a one-size-fits-all scenario. While GPT-4o is preferred for speed-focused, high-context applications, DeepSeek-R1 is preferred by organizations that prioritize transparency and cost-effectiveness. Enterprises must carefully evaluate their unique requirements to make informed decisions that drive optimal outcomes.