Large Language Models (LLMs) like DeepSeek, Mistral, and LLaMA have gone from research labs to real-world applications — powering chatbots, search engines, personal assistants, and enterprise AI tools.

But getting these models into production isn’t a plug-and-play operation. It involves critical architectural decisions — especially around LLM deployment strategies.

In this article, we’ll explore the most common LLM deployment methods, compare them side by side, and help you decide which to use and when — with visuals, real-world use cases, and performance data.

Created by AI

1. First Question: Cloud or Self-Hosted?

Before choosing a deployment method, answer this:

Do you want to use a hosted API (cloud-based) or run the model on your own infrastructure (self-hosted)?

Cloud-Based (OpenAI, Gemini, Anthropic, etc.)

Pros:

- No setup required

- State-of-the-art models (GPT-4, Claude 3, Gemini Pro)

- Easy scaling, managed services

Cons:

- Pay per usage (token-based pricing)

- Your data is sent to third-party servers

- Limited customization

Best for: MVPs, rapid prototyping, startups with limited infra, or when you need best-in-class models without worrying about hosting.

Self-Hosted (vLLM, Ollama, TGI)

You run the model on your own GPU server or local machine.

Pros:

- Full control over data and models

- Potentially cheaper at scale

- Offline and private use

Cons:

- Requires strong hardware (especially for large models)

- Setup, maintenance, and updates are your responsibility
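
To make "potentially cheaper at scale" concrete, here is a rough break-even sketch. It compares a fixed monthly GPU bill with pay-per-token API pricing. Both prices are hypothetical placeholders, not quotes from any provider, and the calculation ignores engineering and maintenance time.

```python
def break_even_tokens(gpu_cost_per_month: float, api_price_per_million_tokens: float) -> float:
    """Monthly token volume at which a fixed-cost GPU server matches API spend."""
    if api_price_per_million_tokens <= 0:
        raise ValueError("API price must be positive")
    return gpu_cost_per_month / api_price_per_million_tokens * 1_000_000


# Hypothetical figures for illustration only; substitute your own.
GPU_COST_PER_MONTH = 1_000.0          # USD, assumed rental cost of one GPU server
API_PRICE_PER_MILLION_TOKENS = 10.0   # USD, assumed blended API price

tokens = break_even_tokens(GPU_COST_PER_MONTH, API_PRICE_PER_MILLION_TOKENS)
print(f"Break-even at about {tokens:,.0f} tokens per month")
```

Below that volume the hosted API is the cheaper option under these assumptions; above it, self-hosting starts to pay off, provided one server can actually handle the load.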

2. Key Deployment Options

🧠 vLLM (Virtual Large Language Model Server)

- HuggingFace-compatible

- Built for performance (uses PagedAttention)

- OpenAI-compatible API (/v1/chat/completions)

- Handles hundreds of concurrent requests

💻 Ollama

- CLI tool for running quantized models locally

- Uses GGUF format (efficient for CPU/GPU)

- Extremely lightweight & fast to set up

🌐 TGI (Text Generation Inference)

Created by AI

3. Practical Usage Examples

Here are quick, real-world commands to help you get started with each deployment method:

▶️ Run Mistral Locally Using Ollama

ollama run mistral

Runs a quantized Mistral model on your local machine in seconds — no extra setup required.
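
Once the model is running, Ollama also serves a local HTTP API, so you can call it from code. Here is a minimal sketch using only the Python standard library. It assumes Ollama is listening on its default address (localhost:11434), and the helper names are made up for this example.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_ollama_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_ollama(prompt: str, model: str = "mistral") -> str:
    """Send the prompt and return the generated text (requires Ollama to be running)."""
    with urllib.request.urlopen(build_ollama_request(prompt, model)) as response:
        return json.loads(response.read())["response"]
```

With the server up, `ask_ollama("Explain GGUF in one sentence.")` returns the model's reply as a string.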

⚙️ Serve a GPT-Compatible API with vLLM

python -m vllm.entrypoints.openai.api_server --model facebook/opt-1.3b

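Launches a high-performance OpenAI-style API server with vLLM, listening on port 8000 by default and serving the OPT 1.3B model. It exposes the /v1/completions and /v1/chat/completions routes, so clients that already speak the OpenAI API only need a new base URL.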
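
To check that the server responds, you can send it a request from Python. The sketch below assumes the server runs locally on vLLM's default port (8000). It uses the plain completions route because OPT is a base model rather than a chat-tuned one. The function names are illustrative only.

```python
import json
import urllib.request

VLLM_URL = "http://localhost:8000/v1/completions"  # vLLM's default port is 8000


def completion_payload(prompt: str, model: str = "facebook/opt-1.3b", max_tokens: int = 32) -> dict:
    """Request body in the OpenAI completions format."""
    return {"model": model, "prompt": prompt, "max_tokens": max_tokens}


def complete(prompt: str) -> str:
    """POST the prompt to the local vLLM server and return the first completion."""
    request = urllib.request.Request(
        VLLM_URL,
        data=json.dumps(completion_payload(prompt)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["choices"][0]["text"]
```

The `model` value must match the name passed to `--model` when the server was started.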

🐳 Deploy Mistral via Docker with TGI

docker run -p 8080:80 ghcr.io/huggingface/text-generation-inference --model-id mistralai/Mistral-7B-Instruct-v0.1

Starts HuggingFace’s TGI server in a container and exposes a REST API on port 8080, ready to serve the Mistral-7B-Instruct model. On a GPU host, add --gpus all so the container can access the GPU.
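
A quick way to try the endpoint is TGI's /generate route. The sketch below assumes the container is reachable on localhost:8080, matching the -p 8080:80 mapping above. It wraps the message in the [INST] tags used by Mistral instruct models, because this route takes the prompt text as-is. The helper names are hypothetical.

```python
import json
import urllib.request

TGI_URL = "http://localhost:8080/generate"  # host port from the docker command


def instruct_prompt(user_message: str) -> str:
    """Wrap a message in the [INST] tags that Mistral instruct models expect."""
    return f"[INST] {user_message} [/INST]"


def tgi_payload(user_message: str, max_new_tokens: int = 64) -> dict:
    """Request body for TGI's /generate route."""
    return {
        "inputs": instruct_prompt(user_message),
        "parameters": {"max_new_tokens": max_new_tokens},
    }


def generate(user_message: str) -> str:
    """POST to the local TGI server and return the generated text."""
    request = urllib.request.Request(
        TGI_URL,
        data=json.dumps(tgi_payload(user_message)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["generated_text"]
```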

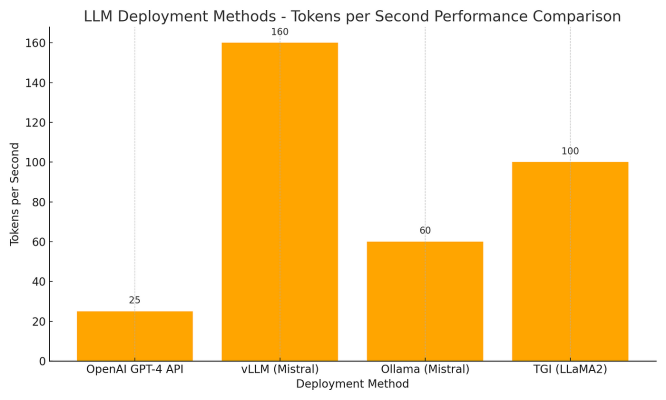

4. Performance Comparison: Tokens per Second

Here’s an example of average token generation speed for different platforms:

🟢 vLLM shines in terms of throughput

🟠 Ollama is fast enough for local use

🔵 OpenAI API provides convenience but is slower due to network/API latency
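
If you want to reproduce this kind of comparison on your own hardware, the measurement itself is simple: count generated tokens and divide by elapsed time. Here is a minimal sketch. The `generate` callable is a placeholder for whatever client you use, and `fake_backend` is a stand-in so the example runs without a model.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[str], int], prompt: str, runs: int = 3) -> float:
    """Average generation speed over several runs.

    `generate` is any function that sends the prompt to a backend and
    returns the number of tokens it produced.
    """
    total_tokens = 0
    start = time.perf_counter()
    for _ in range(runs):
        total_tokens += generate(prompt)
    elapsed = time.perf_counter() - start
    return total_tokens / elapsed


def fake_backend(prompt: str) -> int:
    """Stand-in backend: pretends to take 10 ms to produce 20 tokens."""
    time.sleep(0.01)
    return 20


print(f"{tokens_per_second(fake_backend, 'Hello'):.0f} tokens/s")
```

For a fair comparison, use the same prompt, the same output length, and the same model size on every platform, and remember that hosted APIs include network round trips in the measured time.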

Created by AI

5. Memory Usage

Local memory (RAM) required to run these models efficiently:

- OpenAI: 0 GB (runs in the cloud)

- vLLM: 18 GB (suitable for 7B+ models)

- Ollama: Lightweight (8 GB for 7B GGUF)

- TGI: Moderate to high, depending on quantization
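
A useful rule of thumb behind numbers like these: the weights alone need roughly (parameter count × bits per parameter ÷ 8) bytes. The sketch below applies that formula to a 7B model. It covers weights only; the KV cache and runtime overhead come on top, so real usage is higher.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_param / 8


for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"7B at {label}: ~{weight_memory_gb(7, bits):.1f} GB for weights")
```

This is why quantized GGUF models fit comfortably on laptops, while unquantized 16-bit serving typically calls for a dedicated GPU.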

6. Max Concurrent Requests

Critical for production systems with heavy traffic:

vLLM offers industry-grade scalability, while Ollama is ideal for personal apps or low-traffic internal tools.
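
To see how your own setup behaves under load, you can fire a batch of requests with a fixed number in flight and time the whole run. This is a rough sketch, not a full load-testing tool. `send_request` is a placeholder for a real client call, and `fake_request` simulates a 50 ms response so the example runs on its own.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Tuple


def run_concurrently(send_request: Callable[[int], bool], total: int, concurrency: int) -> Tuple[int, float]:
    """Fire `total` requests with at most `concurrency` in flight.

    Returns (number of successful requests, wall-clock seconds).
    `send_request` should return False on failure rather than raise.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(send_request, range(total)))
    return sum(results), time.perf_counter() - start


def fake_request(request_id: int) -> bool:
    """Stand-in for a real call: sleeps 50 ms and reports success."""
    time.sleep(0.05)
    return True


ok, seconds = run_concurrently(fake_request, total=20, concurrency=10)
print(f"{ok}/20 succeeded in {seconds:.2f}s")
```

Raising `concurrency` step by step until latency or error rates climb gives a practical estimate of how many simultaneous users a deployment can serve.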

7. Final Thoughts: Flexibility Is the Key

There’s no one-size-fits-all when it comes to deploying LLMs.

👉 If you’re building a simple app, an API might suffice.

👉 If you’re scaling traffic, vLLM could save you thousands.

👉 If you want full privacy or need an offline tool, Ollama is a fantastic choice.

Know your use case. Control your costs. Optimize for performance.

Your Turn 🚀

Which deployment method have you used? What worked, what didn’t?

Drop your thoughts or questions in the comments. We’d love to hear about your experience!
